In the "Robotorium" in Johns Hopkins University's Hackerman Hall, the robot revolution has officially begun. On a recent afternoon, robots zipped through hallways, navigated obstacle courses, and even solved jigsaw puzzles.

These activities were part of engineering students' demonstration of robotics projects they conducted for their graduate-level Robot Systems Programming course, offered by the Whiting School of Engineering. Each year, students must teach a robot a set of new skills for the course's capstone project. Working in small teams, they can select from a variety of platforms—robotic arms, underwater and aerial drones, electric cars—but they must build a full-scale robotics system that can perform at least two tasks, one of which must be done autonomously.

Inspired by real-world problems, this year's cohort designed robots that can be used in a variety of applications—a list that just keeps growing with each class.

"Our students have already studied advanced mathematics, modeling, algorithms, and programming for robotics. But for most, this is the first time they are challenged to conceive of and develop a full-scale robotic system from the ground up, developing their own original software and hardware and utilizing a vast ecosystem of open-source hardware and software," said Louis Whitcomb, professor of mechanical engineering and computer science, who created and taught the class this year, along with teaching assistants Chia-Hung Lin, Gabe Bariban, and Han Shi.

The following three projects, out of 10 in total, offer a glimpse of what Hopkins engineers can create at the cutting edge of robotics:

Task-Drive Swarm

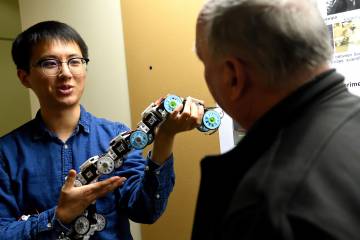

They say many hands make light work, and students Kevin Chang, Joseph Chung, Minsung Chris Hong, and Panth Patel prove that old adage is true not just for humans. The team developed a "swarm" of robots that work together to search their environment for new objects. The "leader" robot uses computer vision to locate new objects in a given area and sends location data to two sub-robots. The sub-robots are then dispatched to investigate the new objects.

Video credit: Panth Patel

Getting multiple robots to communicate and collaborate with one another proved difficult. "Our biggest challenge was overcoming Wi-Fi network latency, or not having enough bandwidth for all the information," said Patel. "Our system ran smoothly when we had two robots, but when we added in the third, we saw a lag with the data telemetry."

Multi-robot systems could have many helpful uses in everyday life. "We imagine this type of robot system could be very useful for delivery services," said Chung. "The leader robot could be an autonomous delivery truck that identifies addresses, and the sub-robots would make the deliveries."

TurtleBoat

Robotics is a fun and exciting way to introduce young children to engineering, but robot parts can be expensive, making it difficult for some schools to offer hands-on robotic activities.

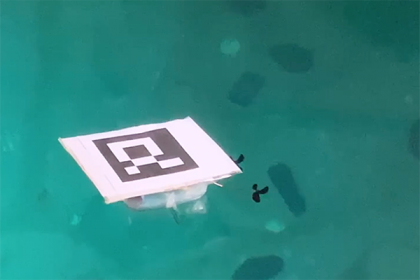

Image caption: The TurtleBoat prototype gets its feet wet

The TurtleBoat, created by Alexander Cohen and Florian Pontani, is an affordable robot boat that will help young students learn to maneuver aquatic robots. The battery-powered TurtleBoat, whose hull is made from a common Tupperware container, has an on-board computer "brain" that controls two electrically actuated propellers, navigation sensors, and a low-cost laser/camera system for surveying the seafloor.

"We wanted a robust design that was inexpensive and easy to build, but also fun to use," said Cohen. "When you introduce kids to robots, the first thing they ask is what can you do with it? We chose to make a robotic boat because there are so many things you can do with it. Marine robots can be utilized to map the ocean floor, track underwater objects, and study marine life."

Smart Tissue Autonomous Robot

Robots in the operating room help surgeons perform safer, less invasive surgical procedures. The Smart Tissue Autonomous Robot, or STAR, created by researchers at the Children's National Health System, University of Maryland, and Johns Hopkins University, is a surgical robot designed to suture soft tissue. While the device has been successful in animal surgeries, it still needs to be tested on human subjects—and that's where Hopkins engineering graduate students Wei-Lun Huang and Yeping Wang are stepping in, with the guidance and mentorship of Simon Leonard, an assistant research professor in computer science.

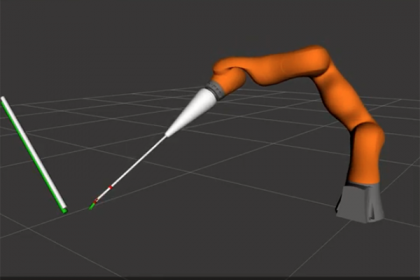

Image caption: A still from the STAR system simulation

The STAR system will need a more complex motion planning algorithm to perform surgery in a living body. Huang and Wang have developed a simulation platform to test how these algorithms will perform in real life. Ultimately, the project could help researchers get a step closer to using STAR on real patients.

"Our challenge was integrating simulation software with an existing system," said Huang. "Our software needs to mimic the main functions of the STAR system as closely as possible. We spent a lot of time trying to understand and model every aspect of the STAR system."
