- Barbara Benham: bbenham1@jhu.edu

- Michael Hughes: mhughe18@jhu.edu

- Office phone: 410-614-4919

A study led by scientists at Johns Hopkins Bloomberg School of Public Health suggests that advanced algorithms working from large chemical databases can predict a new chemical's toxicity better than standard animal tests. The computer-based approach could replace many animal tests commonly used during consumer product testing. It could also evaluate more chemicals than animal testing, a change that could lead to wider safety assessments.

For the study, which appears online today in the journal Toxicological Sciences, the researchers mined a large database of known chemicals, which they had developed, to map the relationships between chemical structures and toxic properties. They then showed that the map can be used to automatically predict the toxic properties of any chemical compound more accurately than a single animal test can.

"These results are a real eye-opener—they suggest that we can replace many animal tests with computer-based predictions and get more reliable results," says principal investigator Thomas Hartung from the Department of Environmental Health and Engineering. He directs the Center for Alternatives to Animal Testing, which seeks to promote humane science by finding and developing alternatives to the use of animals in research, product safety testing, and education.

Owing to costs and ethical challenges, only a small fraction of the roughly 100,000 chemicals in consumer products has been comprehensively tested. Mice, rabbits, guinea pigs, and dogs annually undergo millions of chemical toxicity tests in labs around the world. Such testing is opposed on moral grounds by large segments of the public and, given its high costs and the uncertainties about the testing results, it is also unpopular with product manufacturers.

"A new pesticide, for example, might require 30 separate animal tests, costing the sponsoring company about $20 million," Hartung says.

The most common alternative to animal testing is a process called read-across, in which researchers predict a new compound's toxicity based on the known properties of chemicals that have a similar structure. Read-across is much less expensive than animal testing, yet requires expert evaluation and somewhat subjective analysis for every compound of interest.

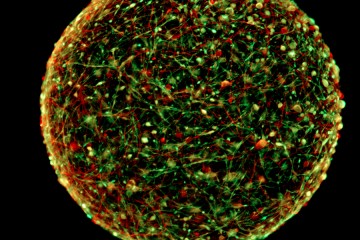

As a first step toward optimizing and automating the read-across process, Hartung and colleagues assembled the world's largest machine-readable toxicological database two years ago. It contains information on the structures and properties of 10,000 chemical compounds, based in part on 800,000 separate toxicology tests.

"There is enormous redundancy in this database," Hartung says. "We found that often the same chemical has been tested dozens of times in the same way, such as putting it into rabbits' eyes to check if it's irritating."

This redundancy, however, gave the researchers information they needed to develop a benchmark for a better approach. The team enlarged the database and used machine-learning algorithms, with computing muscle provided by Amazon's cloud server system, to read the data and generate a "map" of known chemical structures and their associated toxic properties. They developed related software to determine precisely where any compound of interest belongs on the map and whether, based on the properties of compounds "nearby," it is likely to have toxic effects such as skin irritation or DNA damage.
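The article does not spell out the algorithm behind the map. As a rough illustration of the underlying read-across idea, the sketch below assumes each chemical is represented as a set of structural-feature IDs (a stand-in for a molecular fingerprint), measures structural similarity with the Tanimoto coefficient, and predicts a toxic/non-toxic label from a similarity-weighted vote among the `k` most similar reference chemicals. The names `tanimoto` and `read_across`, the parameter `k`, and the toy reference data are all hypothetical; this is a generic k-nearest-neighbour sketch, not the study's software.

```python
from collections import Counter


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two sets of structural features."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def read_across(query: frozenset, reference: list, k: int = 3):
    """Predict toxicity for `query` from its k most similar reference chemicals.

    reference: list of (feature_set, is_toxic) pairs with known outcomes.
    Returns (prediction, neighbours), where neighbours are (similarity, label).
    """
    # Score every reference chemical by structural similarity to the query.
    scored = sorted(
        ((tanimoto(query, features), label) for features, label in reference),
        key=lambda pair: pair[0],
        reverse=True,
    )
    neighbours = scored[:k]
    # Similarity-weighted vote: closer chemicals count more.
    # A tie (including all-zero similarity) defaults to "not toxic".
    weights = Counter()
    for similarity, label in neighbours:
        weights[label] += similarity
    prediction = weights[True] > weights[False]
    return prediction, neighbours


# Hypothetical toy data: feature IDs and labels are invented for illustration.
reference = [
    (frozenset({1, 2, 3, 4}), True),
    (frozenset({1, 2, 3, 5}), True),
    (frozenset({6, 7, 8}), False),
    (frozenset({6, 7, 9}), False),
]
prediction, neighbours = read_across(frozenset({1, 2, 3}), reference, k=3)
print(prediction)   # True
print(neighbours)   # [(0.75, True), (0.75, True), (0.0, False)]
```

Weighting votes by similarity lets a few close analogues outweigh more distant ones, which matches the intuition that compounds "nearby" on the map are the most informative.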

The most successful version of the toxicity-prediction tool the team developed was on average about 87 percent accurate in reproducing the consensus of animal test results across nine common tests. By contrast, the animal tests in the database were only about 81 percent reproducible: on average, a given test had only an 81 percent chance of obtaining the same toxicity result when repeated.
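The article does not say exactly how the 81 and 87 percent figures were calculated. One simple way to quantify the comparison it describes is sketched below, under that assumption: an animal test's reproducibility is taken as the average fraction of pairs of repeated runs on the same chemical that agree, and a predictor's accuracy is the fraction of chemicals for which its prediction matches the majority (consensus) result of the repeated tests. The function names and the three-chemical data set are hypothetical and do not reproduce the study's data.

```python
from itertools import combinations
from statistics import mean


def pairwise_agreement(results: list) -> float:
    """Fraction of pairs of repeated test runs on one chemical that agree."""
    pairs = list(combinations(results, 2))
    return sum(a == b for a, b in pairs) / len(pairs)


def reproducibility(repeats: dict) -> float:
    """Average pairwise agreement over chemicals tested at least twice."""
    return mean(pairwise_agreement(r) for r in repeats.values() if len(r) >= 2)


def consensus(results: list):
    """Majority outcome of repeated runs; None when positives and negatives tie."""
    positives = sum(results)
    negatives = len(results) - positives
    if positives == negatives:
        return None
    return positives > negatives


def accuracy_vs_consensus(predictions: dict, repeats: dict) -> float:
    """Fraction of chemicals (with a clear consensus) the predictor gets right."""
    scored = [(predictions[chem], consensus(r)) for chem, r in repeats.items()]
    scored = [(p, c) for p, c in scored if c is not None]
    return sum(p == c for p, c in scored) / len(scored)


# Hypothetical repeated animal-test outcomes (True = toxic) and model predictions.
repeats = {
    "chem_A": [True, True, True, False],
    "chem_B": [False, False, False],
    "chem_C": [True, False, True],
}
predictions = {"chem_A": True, "chem_B": False, "chem_C": False}

print(round(reproducibility(repeats), 3))                     # 0.611
print(round(accuracy_vs_consensus(predictions, repeats), 3))  # 0.667
```

Measuring the predictor against the consensus, rather than against a single run, is what makes the comparison meaningful: a single animal test is itself a noisy estimate of that consensus.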

"Our automated approach clearly outperformed the animal test in a very solid assessment using data on thousands of different chemicals and tests," Hartung says. "So it's big news for toxicology."

The U.S. Food and Drug Administration and the Environmental Protection Agency have begun formal evaluations of the new method, to test whether read-across can substitute for a significant proportion of the animal tests currently used to evaluate the safety of chemicals in foods, drugs, and other consumer products. The researchers are already using the tool to help some large corporations, including major technology companies, determine whether they have potentially toxic chemicals in their products.

"One day perhaps, chemists will use such tools to predict toxicity even before synthesizing a chemical so that they can focus on making only nontoxic compounds," Hartung says.
